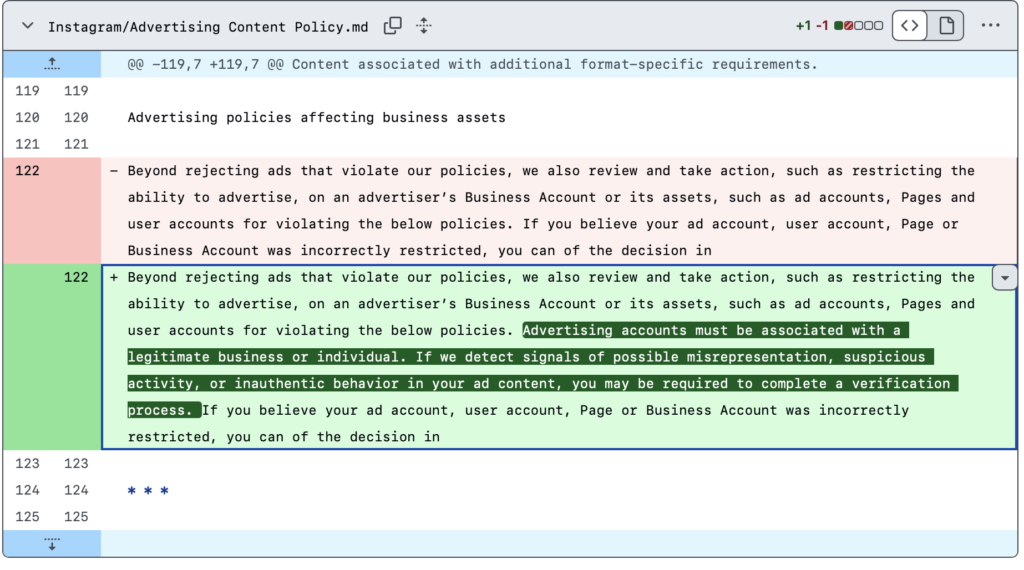

On March 23, 2026, Meta updated its Advertising Content Policy, indicating that advertising accounts must be associated with a legitimate business or individual.

Last October, a report from Investigate Europe showed how scammers exploit EU regulatory loopholes and the lack of platform liability, which enable, for instance, the spread of financial advertisements using celebrity deepfakes. Relatedly, last November a Reuters investigation revealed that, according to Meta’s internal documents, about 10% of its 2024 revenue (~$16 billion) would come from scams and banned goods, and that Meta’s platforms were showing users approximately 15 billion scam ads per day.

In an apparent response to growing concern over the issue, on March 11, 2026, Meta announced that it was launching new AI tools to prevent scams, such as those involving “Celeb-Bait” and “Brand Impersonation”.

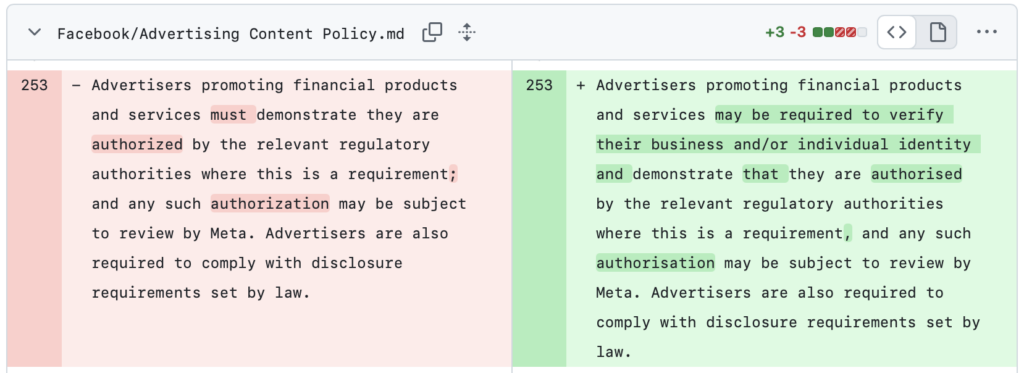

At the same time, in the Facebook update, Meta replaced the “must” clause with “may be”.

The bottom line: Meta is shifting toward stricter verification, meaning that advertisers must verify their legitimacy. If the platform’s new AI detection tools (or other signals) flag an account as suspicious, that advertiser will be required to undergo an additional verification process to prove they’re legit.

The big question is: How effective will these measures be? We’ll have to wait and see if this extra layer of scrutiny actually cracks down on fraud.

🔗 Links to the versions:

- Facebook, Advertising Content Policy: https://github.com/OpenTermsArchive/pga-versions/commit/aa720923035f38bced043c9290aa6a757e78270c

- Instagram, Advertising Content Policy: https://github.com/OpenTermsArchive/pga-versions/commit/18f735ec96b4da4edee8d494def440306e420add

Author: Ayşe Darakçı